
The acronym originated at State Software, a company co-founded by Crockford and others in March 2001. Ĭrockford first specified and popularized the JSON format.

JSON grew out of a need for a real-time server-to-browser session communication protocol without using browser plugins such as Flash or Java applets, the dominant methods used in the early 2000s. History ĭouglas Crockford at the Yahoo Building (2007) The ECMA and ISO/ IEC standards describe only the allowed syntax, whereas the RFC covers some security and interoperability considerations. That same year, JSON was also standardized as ISO/ IEC 21778:2017. RFC 8259, published in 2017, is the current version of the Internet Standard STD 90, and it remains consistent with ECMA-404. But somehow, 'JAY-sawn' seems to have become more common in the technical community." Crockford said in 2011, "There's a lot of argument about how you pronounce that, but I strictly don't care." Standards Īfter RFC 4627 had been available as its "informational" specification since 2006, JSON was first standardized in 2013, as ECMA-404. The UNIX and Linux System Administration Handbook states that " Douglas Crockford, who named and promoted the JSON format, says it's pronounced like the name Jason. The first (2013) edition of ECMA-404 did not address the pronunciation.

s ə n/, as in ' Jason and The Argonauts '".

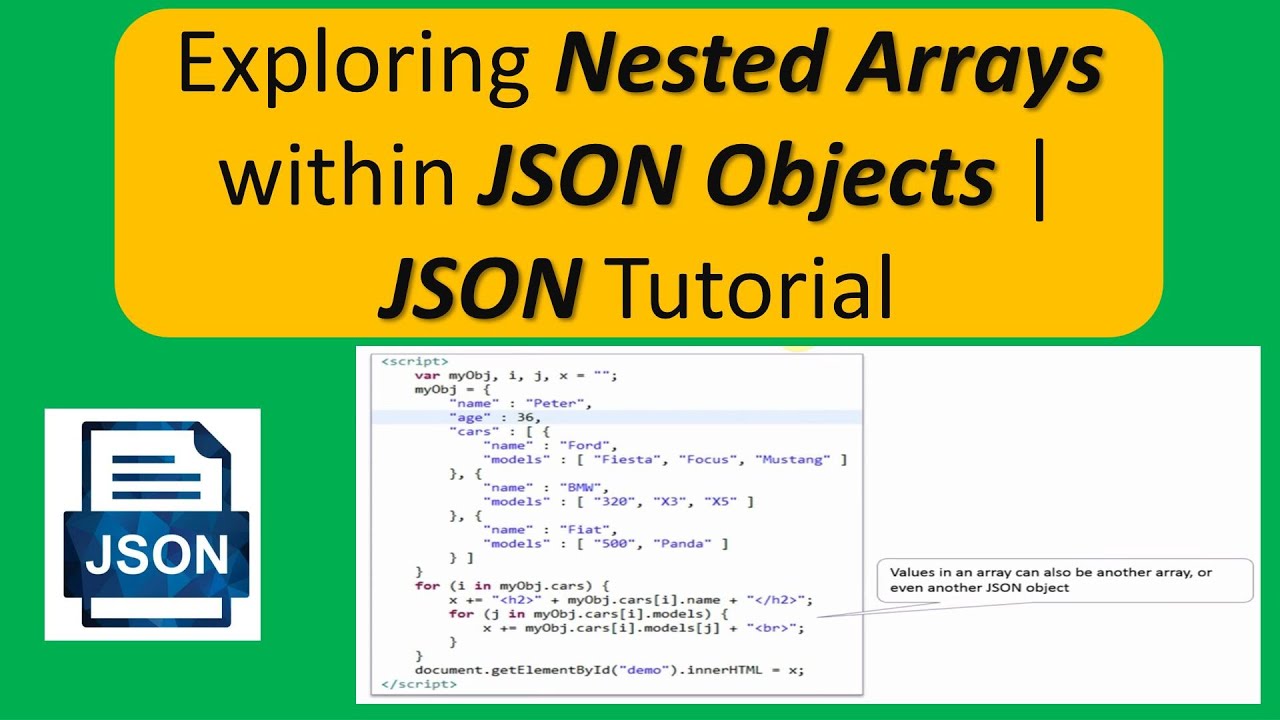

The 2017 international standard (ECMA-404 and ISO/IEC 21778:2017) specifies "Pronounced / ˈ dʒ eɪ. He and Chip Morningstar sent the first JSON message in April 2001. json.ĭouglas Crockford originally specified the JSON format in the early 2000s. It was derived from JavaScript, but many modern programming languages include code to generate and parse JSON-format data. JSON is a language-independent data format. It is a common data format with diverse uses in electronic data interchange, including that of web applications with servers. JSON ( JavaScript Object Notation, pronounced / ˈ dʒ eɪ s ən/ also / ˈ dʒ eɪ ˌ s ɒ n/) is an open standard file format and data interchange format that uses human-readable text to store and transmit data objects consisting of attribute–value pairs and arrays (or other serializable values). The Confluent platform supports all three options ( here) and there are serializers/deserializers available for at least Java. If this is a requirement, you might be better off using JSON Schema or Protobuf for JSON serialization/deserialization on Kafka since it allows for more specific validation and code generation. It is however not easy to only generate messages which make sense (notice the “age” field in my example). Because AVRO is not that specific, it is relatively easy to generate random data which conforms to the schema. AVRO schema are mostly used to help encode JSON messages going over Kafka streams (mostly from Java) and to allow some minimal validation. It is for example not easy (or even possible?) using AVRO to limit an int type to a certain min and max value or to limit a text field to a regular expression.

They are not specific like for example JSON Schema. AVRO schema are limited in how strict they can be.
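To make the difference concrete, here is a minimal sketch in plain Python, not the author's setup and not a real Avro or JSON Schema library. The `Person` record, both schema dictionaries, and the helper functions are hypothetical names made up for illustration. The sketch generates random records that satisfy a type-only, Avro-style schema, then checks them against the `minimum`, `maximum`, and `pattern` keywords that JSON Schema provides. Only those three keywords are handled; a real validator covers far more.

```python
import random
import re

# Hypothetical Avro-style record schema: field types only, no value constraints.
AVRO_STYLE_SCHEMA = {
    "type": "record",
    "name": "Person",
    "fields": [
        {"name": "name", "type": "string"},
        {"name": "age", "type": "int"},
    ],
}

# Hypothetical JSON Schema for the same record, using the standard
# `minimum`, `maximum`, and `pattern` keywords.
JSON_SCHEMA = {
    "type": "object",
    "properties": {
        "name": {"type": "string", "pattern": "^[A-Z][a-z]+$"},
        "age": {"type": "integer", "minimum": 0, "maximum": 130},
    },
    "required": ["name", "age"],
}

# An Avro "int" is a 32-bit signed integer.
INT32_MIN, INT32_MAX = -(2**31), 2**31 - 1


def random_record(schema, rng):
    """Generate a record whose field types match the Avro-style schema."""
    record = {}
    for field in schema["fields"]:
        if field["type"] == "int":
            record[field["name"]] = rng.randint(INT32_MIN, INT32_MAX)
        elif field["type"] == "string":
            length = rng.randint(0, 8)
            record[field["name"]] = "".join(
                rng.choice("abcXYZ019 ") for _ in range(length)
            )
        else:
            raise ValueError(f"unsupported type in this sketch: {field['type']}")
    return record


def type_check(schema, record):
    """Type-only check: all that the Avro-style schema above can express."""
    expected = {"int": int, "string": str}
    return all(
        isinstance(record.get(f["name"]), expected[f["type"]])
        for f in schema["fields"]
    )


def violations(schema, record):
    """Check only the minimum, maximum, and pattern keywords."""
    problems = []
    for name, rules in schema["properties"].items():
        if name not in record:
            problems.append(f"{name}: missing")
            continue
        value = record[name]
        if "minimum" in rules and value < rules["minimum"]:
            problems.append(f"{name}: {value} is below {rules['minimum']}")
        if "maximum" in rules and value > rules["maximum"]:
            problems.append(f"{name}: {value} is above {rules['maximum']}")
        # JSON Schema patterns are unanchored, so search() is the right call.
        if "pattern" in rules and re.search(rules["pattern"], value) is None:
            problems.append(f"{name}: {value!r} does not match {rules['pattern']}")
    return problems


if __name__ == "__main__":
    rng = random.Random(0)
    for _ in range(3):
        record = random_record(AVRO_STYLE_SCHEMA, rng)
        print(record, type_check(AVRO_STYLE_SCHEMA, record))
        print("  ", violations(JSON_SCHEMA, record))
```

Every generated record passes the type-only check, yet an "age" drawn from the full 32-bit range will almost never be a plausible age. The stricter schema is what flags such values.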
